(Source: The New Republic, October 21, 1940)

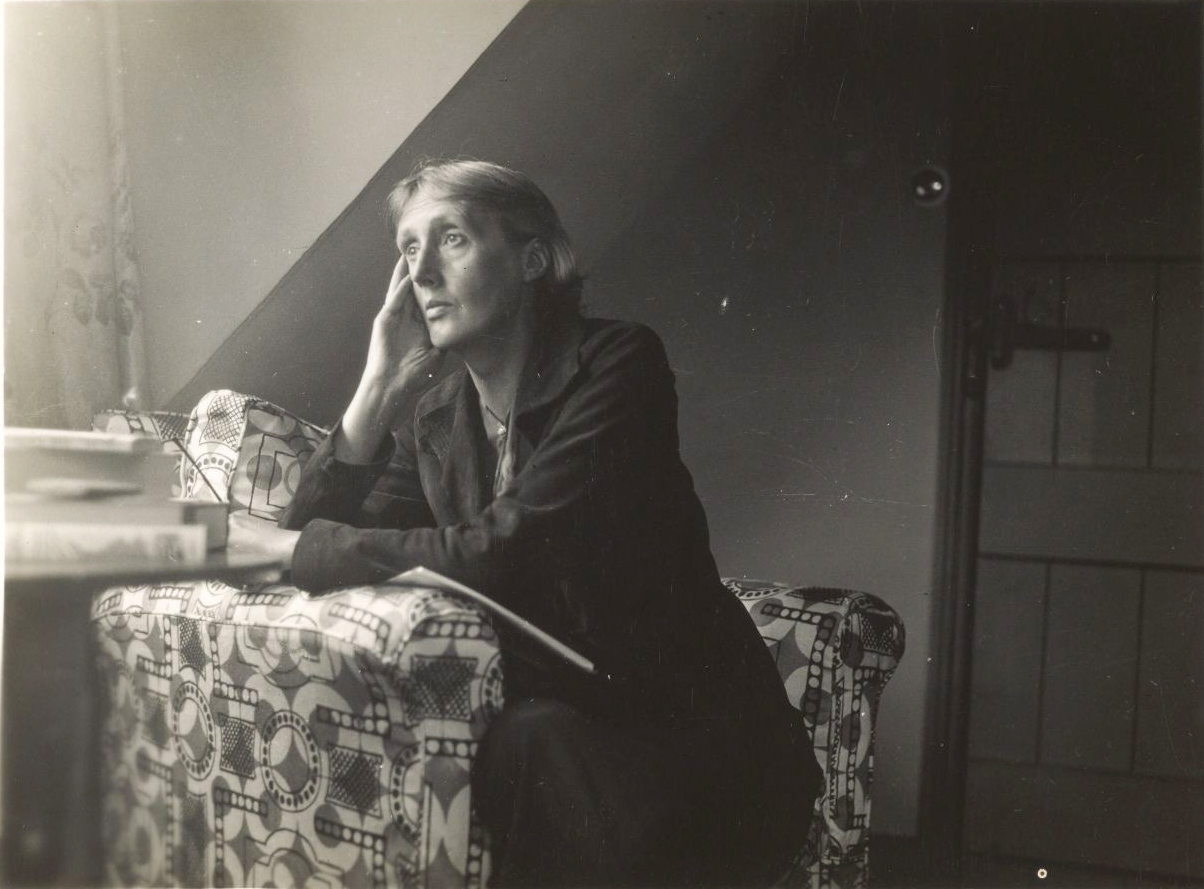

Source: Wikimedia Commons, from Harvard University Library


The Germans were over this house last night and the night before that. Here they are again. It is a queer experience, lying in the dark and listening to the zoom of a hornet which may at any moment sting you to death. It is a sound that interrupts cool and consecutive thinking about peace. Yet it is a sound—far more than prayers and anthems—that should compel one to think about peace. Unless we can think peace into existence we—not this one body in this one bed but millions of bodies yet to be born—will lie in the same darkness and hear the same death rattle overhead. Let us think what we can do to create the only efficient air-raid shelter while the guns on the hill go pop pop pop and the searchlights finger the clouds and now and then, sometimes close at hand, sometimes far away, a bomb drops.

Up there in the sky young Englishmen and young German men are fighting each other. The defenders are men, the attackers are men. Arms are not given to the Englishwoman either to fight the enemy or to defend herself. She must lie weaponless tonight. Yet if she believes that the fight going on up in the sky is a fight by the English to protect freedom, by the Germans to destroy freedom, she must fight, so far as she can, on the side of the English. How far can she fight for freedom without firearms? By making arms, or clothes or food. But there is another way of fighting for freedom without arms; we can fight with the mind. We can make ideas that will help the young Englishman who is fighting up in the sky to defeat the enemy. But to make ideas effective, we must be able to fire them off. We must put them into action. And the hornet in the sky rouses another hornet in the mind. There was one zooming in The Times this morning—a woman’s voice saying, “Women have not a word to say in politics.” There is no woman in the Cabinet; nor in any responsible post. All the idea makers who are in a position to make ideas effective are men. That is a thought that damps thinking, and encourages irresponsibility. Why not bury the head in the pillow, plug the ears, and cease this futile activity of idea making? Because there are other tables besides officer tables and conference tables. Are we not leaving the young Englishman without a weapon that might be of value to him if we give up private thinking, tea-table thinking, because it seems useless? Are we not stressing our disability because our ability exposes us perhaps to abuse, perhaps to contempt? “I will not cease from mental fight,” Blake wrote. Mental fight means thinking against the current, not with it.

The current flows fast and furious. It issues in a spate of words from the loudspeakers and the politicians. Every day they tell us that we are a free people, fighting to defend freedom. That is the current that has whirled the young airman up into the sky and keeps him circling there among the clouds. Down here, with a roof to cover us and a gas mask handy, it is our business to puncture gas bags and discover seeds of truth. It is not true that we are free. We are both prisoners tonight—he boxed up in his machine with a gun handy; we lying in the dark with a gas mask handy. If we were free we should be out in the open, dancing, at the play, or sitting at the window talking together. What is it that prevents us? “Hitler!” the loudspeakers cry with one voice. Who is Hitler? What is he? Aggressiveness, tyranny, the insane love of power made manifest, they reply. Destroy that, and you will be free.

Photo: linked from AP / New York Times

The drone of the planes is now like the sawing of a branch overhead. Round and round it goes, sawing and sawing at a branch directly above the house. Another sound begins sawing its way into the brain. “Women of ability”—it was Lady Astor speaking in The Times this morning—“are held down because of a subconscious Hitlerism in the hearts of men.” Certainly we are held down. We are equally prisoners tonight—the Englishmen in their planes, the Englishwomen in their beds. But if he stops to think he may be killed; and we too. So let us think for him. Let us try to drag up into consciousness the subconscious Hitlerism that holds us down. It is the desire for aggression; the desire to dominate and enslave. Even in the darkness we can see that made visible. We can see shop windows blazing; and women gazing; painted women; dressed-up women; women with crimson lips and crimson fingernails. They are slaves who are trying to enslave. If we could free ourselves from slavery we should free men from tyranny. Hitlers are bred by slaves.

A bomb drops. All the windows rattle. The anti-aircraft guns are getting active. Up there on the hill under a net tagged with strips of green and brown stuff to imitate the hues of autumn leaves guns are concealed. Now they all fire at once. On the nine o’clock radio we shall be told “Forty-four enemy planes were shot down during the night, ten of them by anti-aircraft fire.” And one of the terms of peace, the loudspeakers say, is to be disarmament. There are to be no more guns, no army, no navy, no air force in the future. No more young men will be trained to fight with arms. That rouses another mind-hornet in the chambers of the brain—another quotation. “To fight against a real enemy, to earn undying honor and glory by shooting total strangers, and to come home with my breast covered with medals and decorations, that was the summit of my hope…. It was for this that my whole life so far had been dedicated, my education, training, everything….”

Those were the words of a young Englishman who fought in the last war. In the face of them, do the current thinkers honestly believe that by writing “Disarmament” on a sheet of paper at a conference table they will have done all that is needful? Othello’s occupation will be gone; but he will remain Othello. The young airman up in the sky is driven not only by the voices of loudspeakers; he is driven by voices in himself—ancient instincts, instincts fostered and cherished by education and tradition. Is he to be blamed for those instincts? Could we switch off the maternal instinct at the command of a table full of politicians? Suppose that imperative among the peace terms was: “Childbearing is to be restricted to a very small class of specially selected women,” would we submit? Should we not say, “The maternal instinct is a woman’s glory. It was for this that my whole life has been dedicated, my education, training, everything.” …But if it were necessary for the sake of humanity, for the peace of the world, that childbearing should be restricted, the maternal instinct subdued, women would attempt it. Men would help them. They would honor them for their refusal to bear children. They would give them other openings for their creative power. That too must make part of our fight for freedom. We must help the young Englishmen to root out from themselves the love of medals and decorations. We must create more honorable activities for those who try to conquer in themselves their fighting instinct, their subconscious Hitlerism. We must compensate the man for the loss of his gun.

The sound of sawing overhead has increased. All the searchlights are erect. They point at a spot exactly above this roof. At any moment a bomb may fall on this very room. One, two, three, four, five, six … the seconds pass. The bomb did not fall. But during those seconds of suspense all thinking stopped. All feeling, save one dull dread, ceased. A nail fixed the whole being to one hard board. The emotion of fear and of hate is therefore sterile, unfertile. Directly that fear passes, the mind reaches out and instinctively revives itself by trying to create. Since the room is dark it can create only from memory. It reaches out to the memory of other Augusts—in Bayreuth, listening to Wagner; in Rome, walking over the Campagna; in London. Friends’ voices come back. Scraps of poetry return. Each of those thoughts, even in memory, was far more positive, reviving, healing and creative than the dull dread made of fear and hate. Therefore if we are to compensate the young man for the loss of his glory and of his gun, we must give him access to the creative feelings. We must make happiness. We must free him from the machine. We must bring him out of his prison into the open air. But what is the use of freeing the young Englishman if the young German and the young Italian remain slaves?

The searchlights, wavering across the flat, have picked up the plane now. From this window one can see a little silver insect turning and twisting in the light. The guns go pop pop pop. Then they cease. Probably the raider was brought down behind the hill. One of the pilots landed safe in a field near here the other day. He said to his captors, speaking fairly good English, “How glad I am that the fight is over!” Then an Englishman gave him a cigarette, and an English woman made him a cup of tea. That would seem to show that if you can free the man from the machine, the seed does not fall upon altogether stony ground. The seed may be fertile.

At last all the guns have stopped firing. All the searchlights have been extinguished. The natural darkness of a summer’s night returns. The innocent sounds of the country are heard again. An apple thuds to the ground. An owl hoots, winging its way from tree to tree. And some half-forgotten words of an old English writer come to mind: “The huntsmen are up in America….” Let us send these fragmentary notes to the huntsmen who are up in America, to the men and women whose sleep has not yet been broken by machine-gun fire, in the belief that they will rethink them generously and charitably, perhaps shape them into something serviceable. And now, in the shadowed half of the world, to sleep.
